For more detailed information about our tests, please see below.

All Password tests have been designed to be aligned to the CEFR (The Common European Framework of Reference for Languages: Learning, Teaching, Assessment).

The CEFR is an initiative of the Council of Europe to describe the language ability of learners of foreign languages. It describes this ability on a six-point scale, from A1 for beginners up to C2 for those with mastery of a language, and is the international standard for describing language levels.

For more information on the CEFR see:

www.coe.int/en/web/common-european-framework-reference-languages

www.englishprofile.org/images/pdf/GuideToCEFR.pdf

All Password tests have been formally aligned to the CEFR following the procedures laid out in “Relating Language Examinations to the ‘Common European Framework of Reference for Languages: Learning, Teaching, Assessment’ (CEFR). A Manual”; see www.coe.int/en/web/common-european-framework-reference-languages/relating-examinations-to-the-cefr.

The alignment is undertaken by an independent panel of 10 or more English language education experts with many years of experience, chaired by a language testing expert. The process covers:

Re-familiarisation with the CEFR, theoretical & practical

Individual consideration of test items, assigning CEFR levels and test cut (boundary) scores

Small group discussion and reconsideration of judgements

Panel discussion and reconsideration of judgements

Analysis and formalisation of results

Report production and publication
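As a rough illustration of how individual judgements might be combined across rounds, the sketch below summarises each round of proposed cut scores by its median and spread. The function name, the data and the choice of the median are assumptions made for illustration only; the Manual describes several standard-setting methods, and this page does not state which aggregation the panel uses.

```python
from statistics import median, pstdev


def summarise_round(judgements):
    """Return (median cut score, spread) for one round of panel judgements.

    The median is used here only as an example of a robust summary;
    it is an assumption, not the documented Password procedure.
    """
    return median(judgements), pstdev(judgements)


# Hypothetical boundary scores (out of 40) proposed by ten panellists.
round_1 = [22, 25, 19, 24, 23, 27, 21, 24, 20, 26]  # individual judgements
round_2 = [23, 24, 22, 24, 23, 25, 22, 24, 22, 24]  # after discussion

cut_1, spread_1 = summarise_round(round_1)
cut_2, spread_2 = summarise_round(round_2)
print(f"Round 1: cut score {cut_1}, spread {spread_1:.2f}")
print(f"Round 2: cut score {cut_2}, spread {spread_2:.2f}")
```

In this made-up data the spread narrows after discussion, which is the kind of convergence the reconsideration rounds are meant to encourage.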

For more information on the formal alignment of Password tests to the CEFR please see the two documents:

Password English Tests

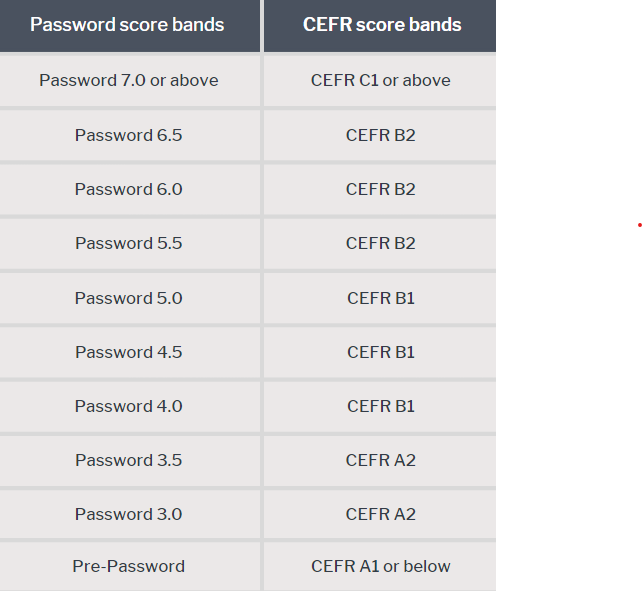

The broad Password test CEFR bands are broken down into more precise Password band scores, as shown below.

Main suite CEFR A2-C1 Password tests:

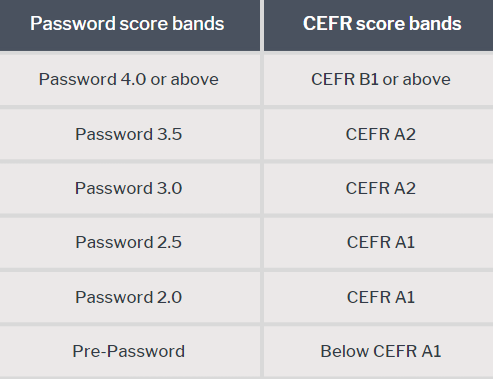

Intro and Younger CEFR A1-B1 Password tests:

“Or above” is used as the top score because, while the score is at least 7.0/C1 or 4.0/B1 (depending on the test), it could be considerably higher, so a precise Password score is not given. Password tests are less accurate at 7.5/5.5 and above, as these levels are beyond their design specification.

“Pre-Password” is below the lower end of scoring.

Password Maths tests

Password Maths tests give a band score of A to F (with A being the highest).

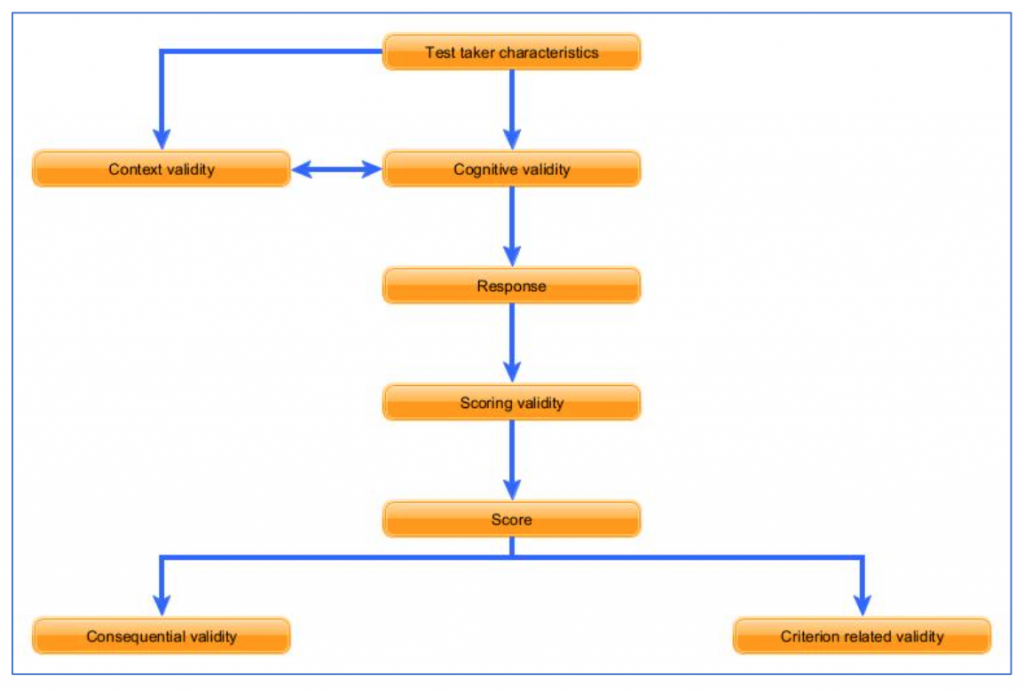

Password English tests are based on Weir’s highly influential socio-cognitive framework (see diagram below), conceived by Professor Cyril Weir in 2005 and further developed by the team at CRELLA. The framework is now widely used to inform language test development, research and validation. It combines social, cognitive and evaluative (scoring) dimensions of language use and links these to the context and consequences of test use.

See www.beds.ac.uk/crella/about/socio-cognitive-framework for more information on Weir’s socio-cognitive framework.

For an example of how a Password test is designed and developed, please see here.

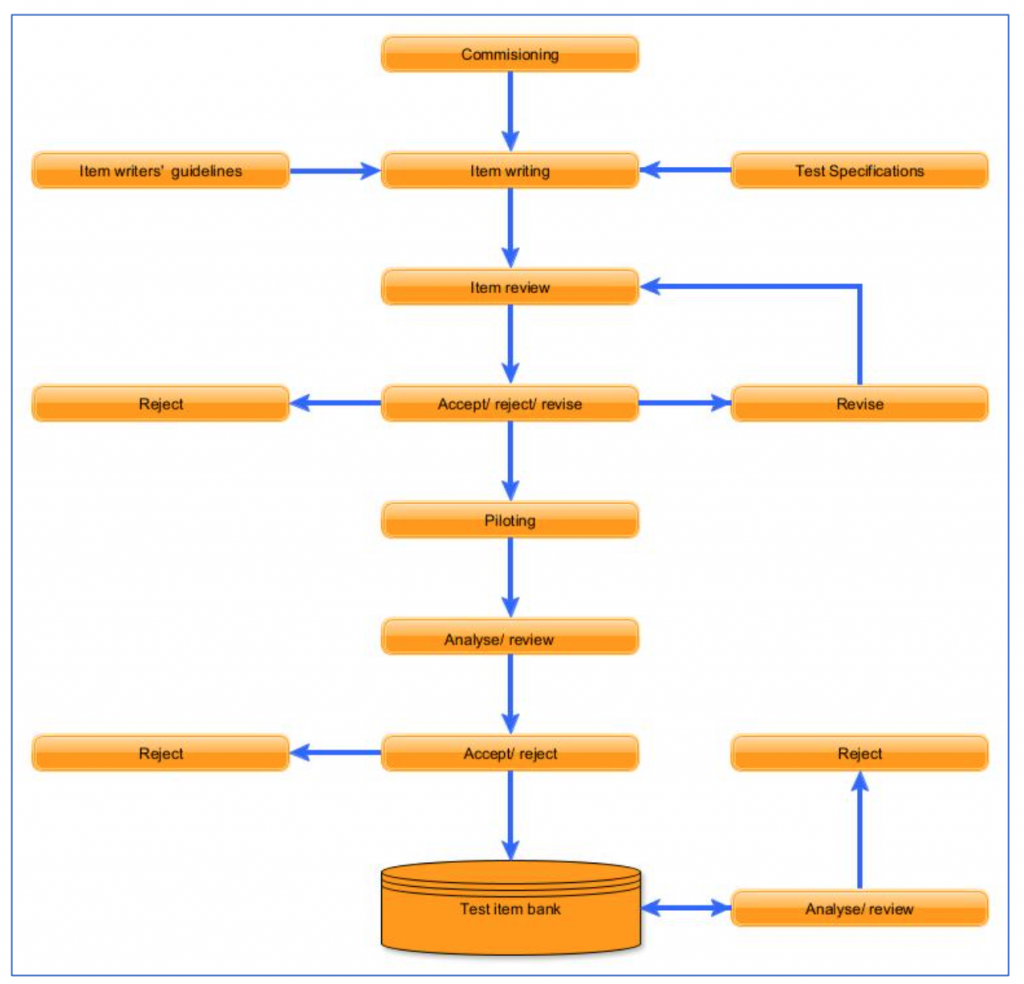

The table below shows how Password items, including tasks and questions, are designed and developed.

| Stage | Description |
| --- | --- |
| Test specifications | Detailed Password test specifications ensure that tests and items (questions) are appropriate for their intended purpose and consistent. |
| Item writing | Test items are written by trained item writers with qualifications in teaching English as a foreign language, following the detailed test specifications, which are further informed by item writers’ guidelines. |
| Item review | New items are reviewed to ensure they conform to the test specification, are clear and unambiguous, and are of sufficient quality. Items may be accepted, rejected or revised at this stage. |
| Piloting | To ensure that test items and tests are of consistent difficulty, Password English language test items are extensively piloted (trialled) before use in a test. Items may be piloted either as non-scoring items in a test or in specially arranged testing sessions. |
| Post-piloting analysis | After each pilot, statistical analysis is carried out to confirm that new test items perform as expected across a broad range of test takers (of various first languages) and, where appropriate, to assign a precise difficulty level. Items may be accepted or rejected at this stage. |
| Ongoing analysis and review | Analysis is performed to monitor the performance of items. |
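To give a sense of what post-piloting analysis can involve, here is a minimal sketch of classical item analysis: a facility value (proportion of test takers answering correctly) and a discrimination index (correlation between the item score and the score on the rest of the test). This is an assumption about the kind of statistics that might be used; the page does not say which methods Password applies, and the function name and response data are hypothetical.

```python
from statistics import mean, pstdev


def item_statistics(responses):
    """Compute (facility, discrimination) for each item.

    responses: one row per test taker, each row a list of 0/1 item scores.
    Discrimination is the Pearson correlation between the item score and
    the total on the remaining items; None if either has no variance.
    """
    n_items = len(responses[0])
    results = []
    for i in range(n_items):
        item = [row[i] for row in responses]
        rest = [sum(row) - row[i] for row in responses]
        facility = mean(item)
        sd_item, sd_rest = pstdev(item), pstdev(rest)
        if sd_item == 0 or sd_rest == 0:
            discrimination = None
        else:
            m_item, m_rest = mean(item), mean(rest)
            cov = mean([(a - m_item) * (b - m_rest) for a, b in zip(item, rest)])
            discrimination = cov / (sd_item * sd_rest)
        results.append((facility, discrimination))
    return results


# Hypothetical pilot data: six test takers, three items (1 = correct).
pilot = [
    [1, 1, 0],
    [1, 0, 0],
    [1, 1, 1],
    [0, 0, 0],
    [1, 1, 1],
    [1, 0, 1],
]

stats = item_statistics(pilot)
for number, (facility, discrimination) in enumerate(stats, start=1):
    print(f"Item {number}: facility={facility:.2f}, discrimination={discrimination:.2f}")
```

An item that nearly everyone answers correctly, or one with low or negative discrimination, would be a natural candidate for rejection at this stage, though the actual acceptance criteria are not given here.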